Video backup to Google Storage account

At api.video, we understand that sometimes you want to make sure that your videos are safe and secure. Although api.video has a backup and disaster recovery plan, it's up to you to decide whether you want an extra backup resource, or whether you would like to use api.video only to transcode your videos and then store them on a different resource.

That's why we've created this guide, which shows how to store the videos you've already transcoded with api.video on a file storage resource.

How is it done?

This guide explains how to store your transcoded videos in a Google Storage account. In short, all you need to do is run a small script that copies the videos from api.video to the Google Storage account. The videos are kept on api.video as well; if you wish to delete them, you can do so with the DELETE /videos endpoint. More information can be found here.
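If you decide to delete videos from api.video after the backup, the request could be prepared along the following lines. This is only a minimal sketch: the base URL `https://ws.api.video`, the `/videos/{videoId}` path, and Bearer-token authentication are assumptions that should be checked against the api.video API reference, and `buildDeleteVideoRequest` is a hypothetical helper that is not part of the Cold Storage script.

```typescript
// Sketch: build the HTTP request that deletes one video from api.video.
// ASSUMPTIONS (not confirmed by this guide): the base URL, the
// /videos/{videoId} path, and Bearer-token authentication.

interface DeleteRequest {
  url: string;
  init: { method: "DELETE"; headers: Record<string, string> };
}

function buildDeleteVideoRequest(videoId: string, accessToken: string): DeleteRequest {
  // Refuse an empty ID so we never send a DELETE to the collection URL.
  if (videoId.trim() === "") {
    throw new Error("videoId must not be empty");
  }
  return {
    url: `https://ws.api.video/videos/${encodeURIComponent(videoId)}`,
    init: {
      method: "DELETE",
      headers: { Authorization: `Bearer ${accessToken}` },
    },
  };
}

// Usage (Node.js 18+ ships a global fetch):
// const { url, init } = buildDeleteVideoRequest("vi_hypothetical_id", token);
// const response = await fetch(url, init);
```

Deleting is irreversible, so it is worth confirming that the video is present in the Google bucket before sending such a request.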

Preparation

What will we need to run the script?

- An api.video API key. You can find out how to retrieve it in the Retrieve your api.video API key guide

- A Google account prepared for the backup. You will need to create a service account for the Storage account; follow the steps below

- The api.video Cold Storage script

- Node.js and npm. You can find the installation instructions here

- TypeScript. You can find the installation instructions here

Setting up service account and getting the credentials

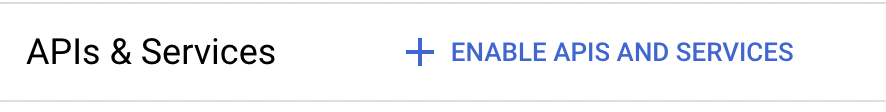

- On Google Cloud Platform, navigate to the menu and select APIs and services

- Select Enabled APIs and services

- Click on + ENABLE APIS AND SERVICES

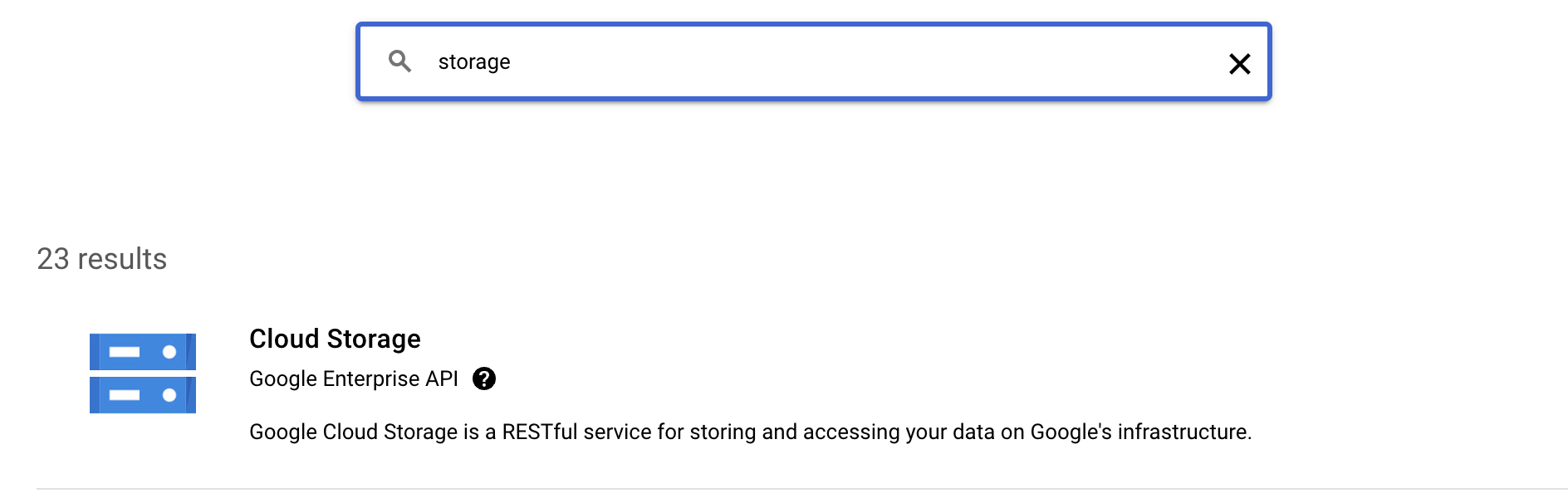

- Search for Storage and select Storage Cloud

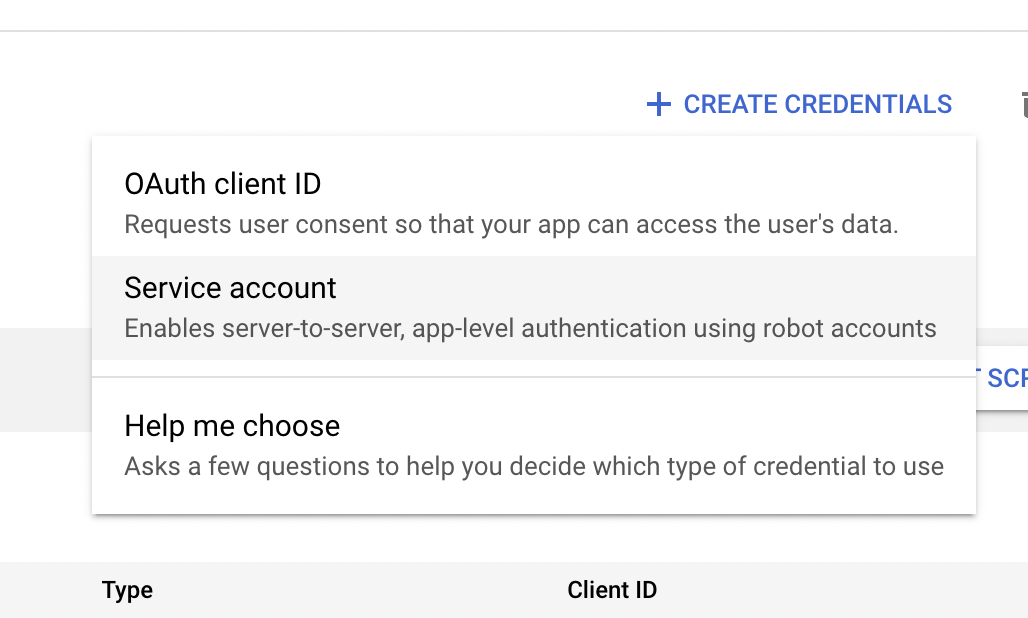

- If the API is not enabled, click on Enable API, and then Manage

- In the API services details, click on + Create credentials

- Select Service account

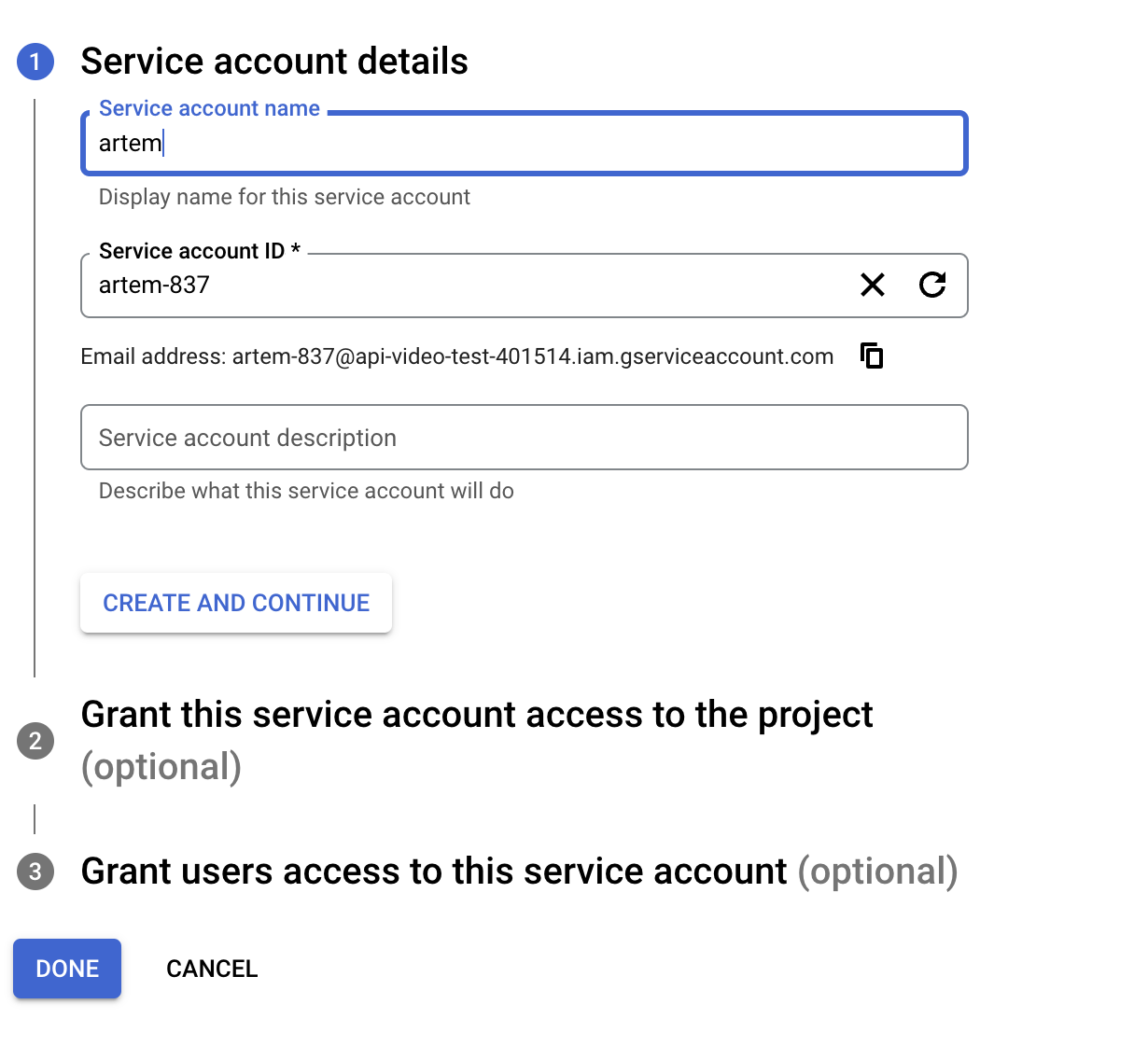

- Fill out the details in the Create Service Account screen, then click on Create and Continue

- In the optional pane

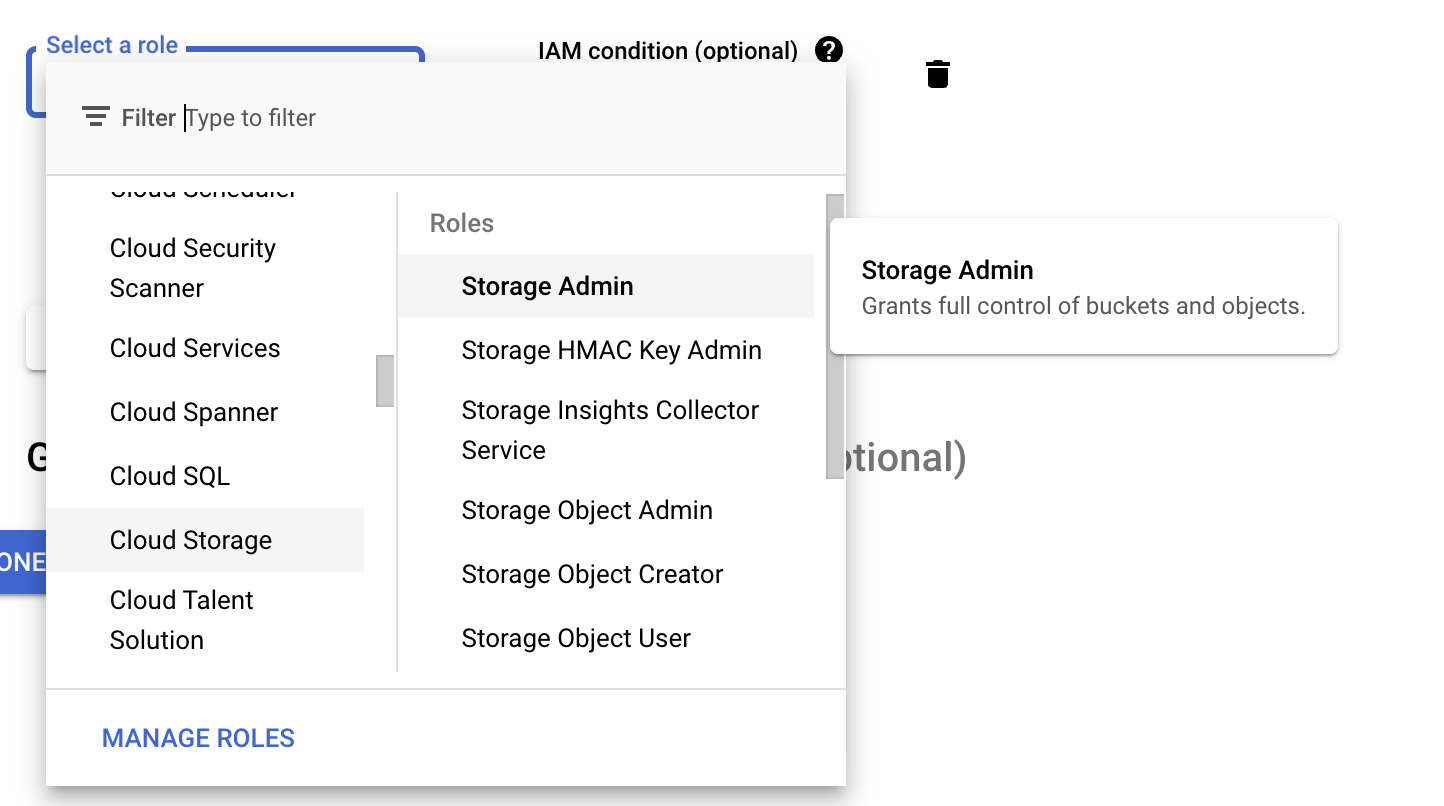

Grant this service account access to the projectgrant the Storage Admin role and click continue

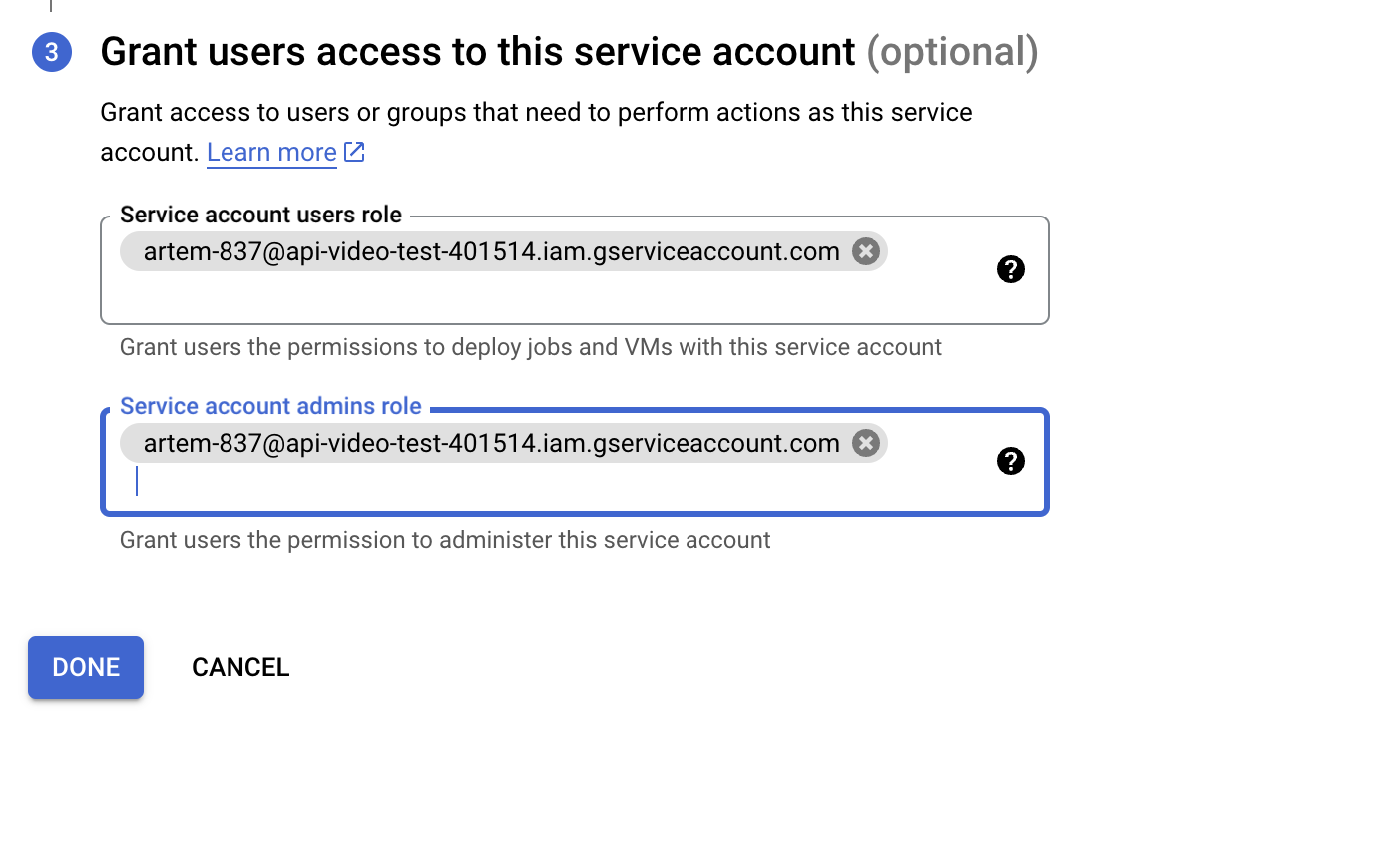

- Add the user to the service account and click Done

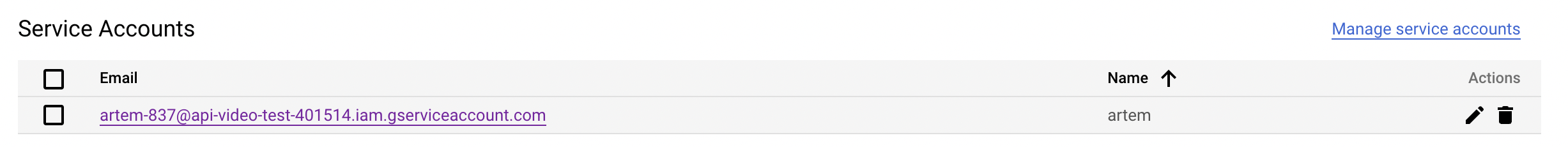

- On the API/Service details page, click on the service account that was just created, under Service Accounts

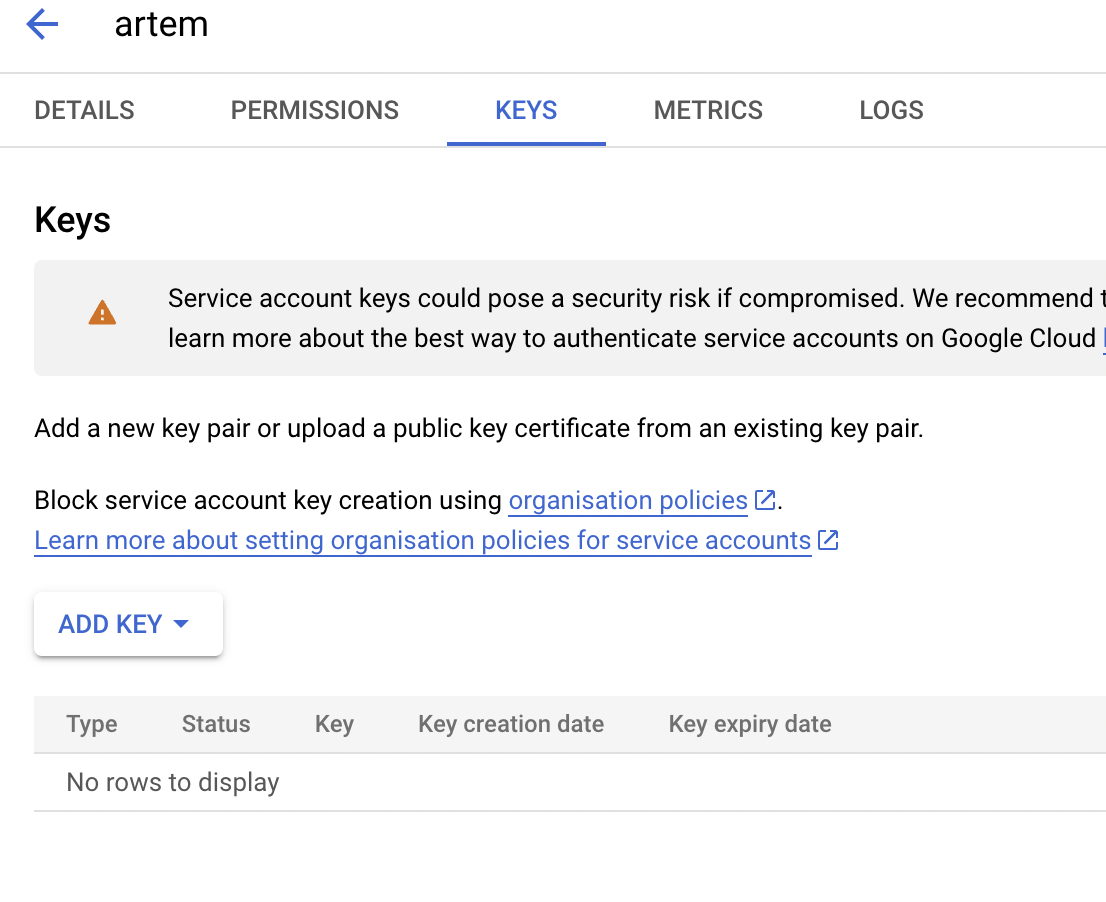

- In the Service Account screen, navigate to the

Keystab

- Click on

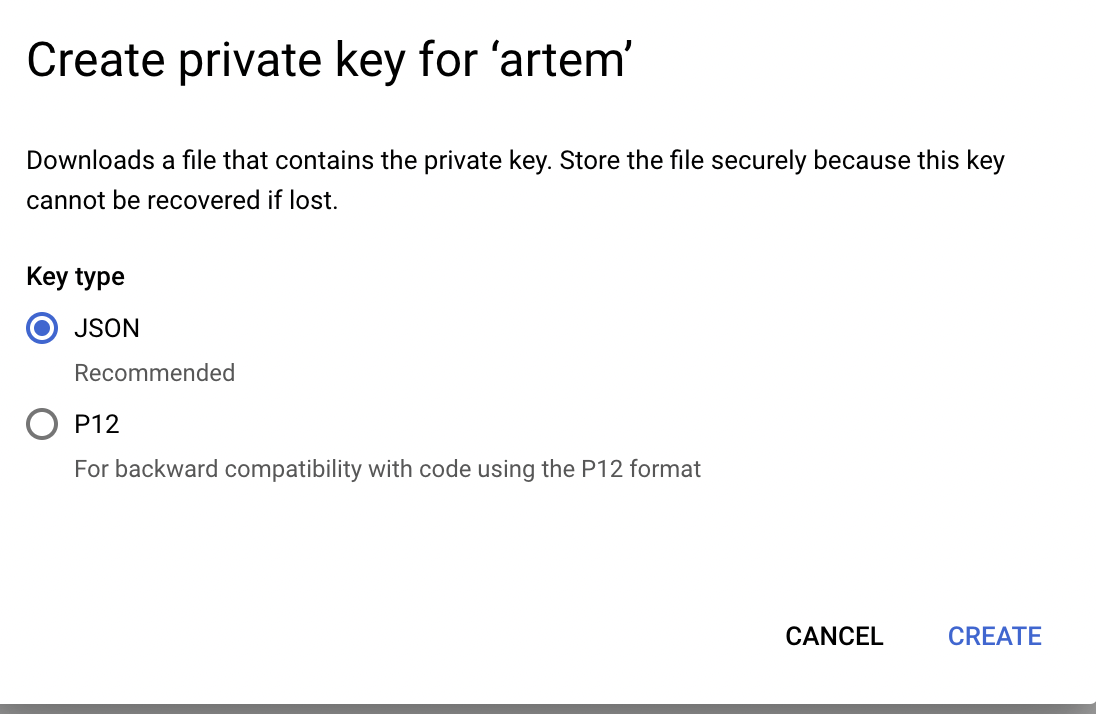

Add KeyandCreate new key, a pop-up will appear, selectJSONand thenCreate.

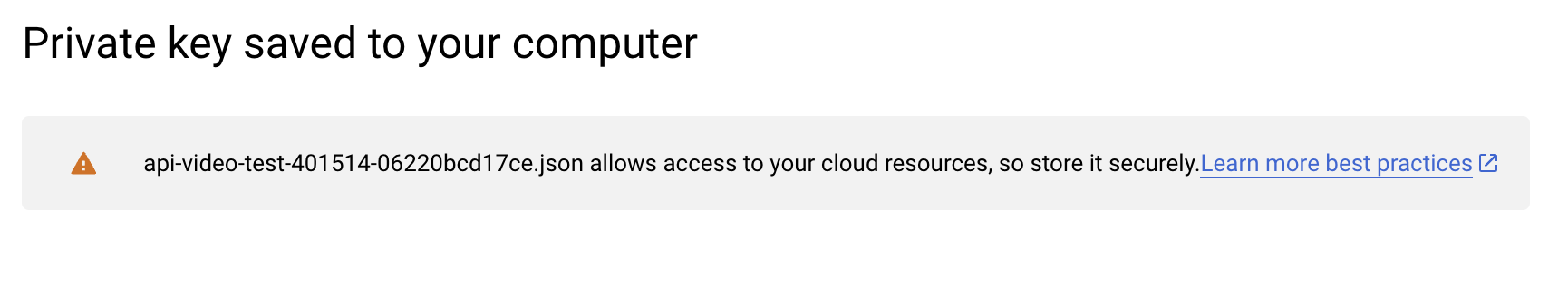

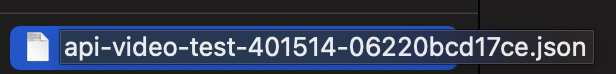

- This action triggers the download of a JSON file, which will be stored on your machine

- Copy the content of the JSON file

- Paste the content into the .env file, in the GCP_KEY parameter. Note that the parameter should be a string, so wrap the JSON in single quotes ('')
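Because the key is pasted by hand, it is easy to lose a brace or a quote along the way. The following is a minimal sketch of how the pasted value could be checked before running the backup. The field names (`type`, `project_id`, `private_key`, `client_email`) are those found in the JSON key file that Google generates; `parseServiceAccountKey` is a hypothetical helper and not part of the Cold Storage script.

```typescript
// Sketch: check that a GCP_KEY value parses as a service account key.
// parseServiceAccountKey is a hypothetical helper for illustration only.

interface ServiceAccountKey {
  type: string;
  project_id: string;
  private_key: string;
  client_email: string;
}

function parseServiceAccountKey(raw: string): ServiceAccountKey {
  let parsed: unknown;
  try {
    parsed = JSON.parse(raw);
  } catch (e) {
    throw new Error("GCP_KEY is not valid JSON");
  }
  if (typeof parsed !== "object" || parsed === null) {
    throw new Error("GCP_KEY must be a JSON object");
  }
  const key = parsed as Record<string, unknown>;
  // Every field we rely on must be a non-empty string.
  for (const field of ["type", "project_id", "private_key", "client_email"]) {
    if (typeof key[field] !== "string" || key[field] === "") {
      throw new Error(`GCP_KEY is missing the "${field}" field`);
    }
  }
  if (key["type"] !== "service_account") {
    throw new Error('GCP_KEY "type" should be "service_account"');
  }
  return parsed as ServiceAccountKey;
}

// Usage, once the .env values are available in process.env:
// const key = parseServiceAccountKey(process.env.GCP_KEY ?? "");
// console.log(`Using service account ${key.client_email}`);
```

The check only confirms the shape of the key; whether the service account actually has the Storage Admin role is verified by Google when the backup runs.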

Getting Started

After you've got all the keys and installed Node.js, npm and TypeScript, you can proceed with cloning the script from GitHub.

Cloning the Cold Storage script

- In the command line, enter the following

$ git clone https://github.com/apivideo/backup-cold-storage

- Once the script is cloned, you can navigate to the script directory

$ cd backup-cold-storage

Setting up the script

Once you are in the script directory, install the dependencies

$ npm install

After the dependencies are installed, we will need to enter the credentials we copied in the preparation phase.

Edit the .env file and replace the following with the keys you've received from Google and api.video.

# possible providers: google, aws, azure

PROVIDER = "google"

# api.video API key

APIVIDEO_API_KEY = "apivideo_api_key"

# the name of the bucket on Google or Amazon S3, or the container on Azure Storage

SPACE_NAME = "google_bucket_name"

# Google credentials

GCP_KEY = '{ google json key

}'

Don't forget to save the file.
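A quick check of the .env values can catch a forgotten placeholder before the backup starts. The sketch below assumes the variables have already been loaded into an object such as `process.env`; the variable names and the placeholder values come from the .env file above, while `readBackupConfig` and `BackupConfig` are hypothetical names and not part of the Cold Storage script.

```typescript
// Sketch: validate the .env values described above.
// readBackupConfig is a hypothetical helper for illustration only.

type Provider = "google" | "aws" | "azure";

interface BackupConfig {
  provider: Provider;
  apiKey: string;
  spaceName: string;
}

const PROVIDERS: readonly string[] = ["google", "aws", "azure"];
// Placeholder values shipped in the .env template.
const PLACEHOLDERS: readonly string[] = ["apivideo_api_key", "google_bucket_name"];

function readBackupConfig(env: Record<string, string | undefined>): BackupConfig {
  const required = (name: string): string => {
    const value = env[name]?.trim();
    if (!value) throw new Error(`${name} is not set in .env`);
    if (PLACEHOLDERS.includes(value)) {
      throw new Error(`${name} still has its placeholder value`);
    }
    return value;
  };

  const provider = required("PROVIDER");
  if (!PROVIDERS.includes(provider)) {
    throw new Error(`PROVIDER must be one of: ${PROVIDERS.join(", ")}`);
  }
  // The Google provider also needs the service account key.
  if (provider === "google") required("GCP_KEY");

  return {
    provider: provider as Provider,
    apiKey: required("APIVIDEO_API_KEY"),
    spaceName: required("SPACE_NAME"),
  };
}

// Usage: const config = readBackupConfig(process.env);
```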

Running the backup

Once you've got all the keys in place in the .env file, it's time to run the script. Because the script is written in TypeScript, we need to build it first. The first command to run in the script folder is:

$ npm run build

After the script is built, it's time to run it:

$ npm run backup